Gemma 3 is available in four variants with 1 billion, 4 billion, 12 billion, and 27 billion parameters. According to Google, it is the world’s most optimized single-accelerator model, capable of running on a single GPU or TPU, eliminating the need for large-scale computing clusters.

In theory, this means Gemma 3 could run directly on the Tensor Processing Unit (TPU) of Pixel smartphones, much like how Gemini Nano operates locally on mobile devices.
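A back-of-the-envelope calculation shows why the smaller variants are plausible candidates for a single accelerator or a phone. The sketch below estimates the memory needed for the model weights alone. The bytes-per-parameter figures are illustrative assumptions about common numeric precisions, not numbers published by Google, and the estimate ignores activations, the attention cache, and runtime overhead.

```python
# Rough estimate of weight memory for each Gemma 3 variant.
# Assumption: memory ~= parameter count x bytes per parameter (weights only).
# The precision options below are illustrative, not Google's published figures.

PARAMS_BILLIONS = {"1B": 1, "4B": 4, "12B": 12, "27B": 27}
BYTES_PER_PARAM = {"16-bit": 2.0, "8-bit": 1.0, "4-bit": 0.5}


def weight_memory_gb(params_billions: float, bytes_per_param: float) -> float:
    """Approximate weight size in gigabytes (1 GB = 10^9 bytes)."""
    # (params_billions * 10^9 params) * bytes_per_param / 10^9 bytes per GB
    return params_billions * bytes_per_param


def memory_table() -> dict:
    """Return {variant: {precision: gigabytes}} for every combination."""
    return {
        variant: {
            precision: weight_memory_gb(size, nbytes)
            for precision, nbytes in BYTES_PER_PARAM.items()
        }
        for variant, size in PARAMS_BILLIONS.items()
    }


if __name__ == "__main__":
    for variant, row in memory_table().items():
        cells = ", ".join(f"{p}: {gb:g} GB" for p, gb in row.items())
        print(f"Gemma 3 {variant} -> {cells}")
```

Under these assumptions, the 27-billion-parameter variant needs about 54 GB for its weights at 16-bit precision, while the 1-billion-parameter variant needs about 2 GB, or less at lower precision, which is closer to what a phone can spare.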

Compared to Google’s Gemini AI models, Gemma 3’s biggest advantage is its openness: Google publishes the model weights, so developers can customize, package, and deploy the model in mobile applications and desktop software, subject to Gemma’s license terms. Additionally, Gemma 3 has pretrained support for over 140 languages, with more than 35 of them supported out of the box.

A model built for efficiency and high performance

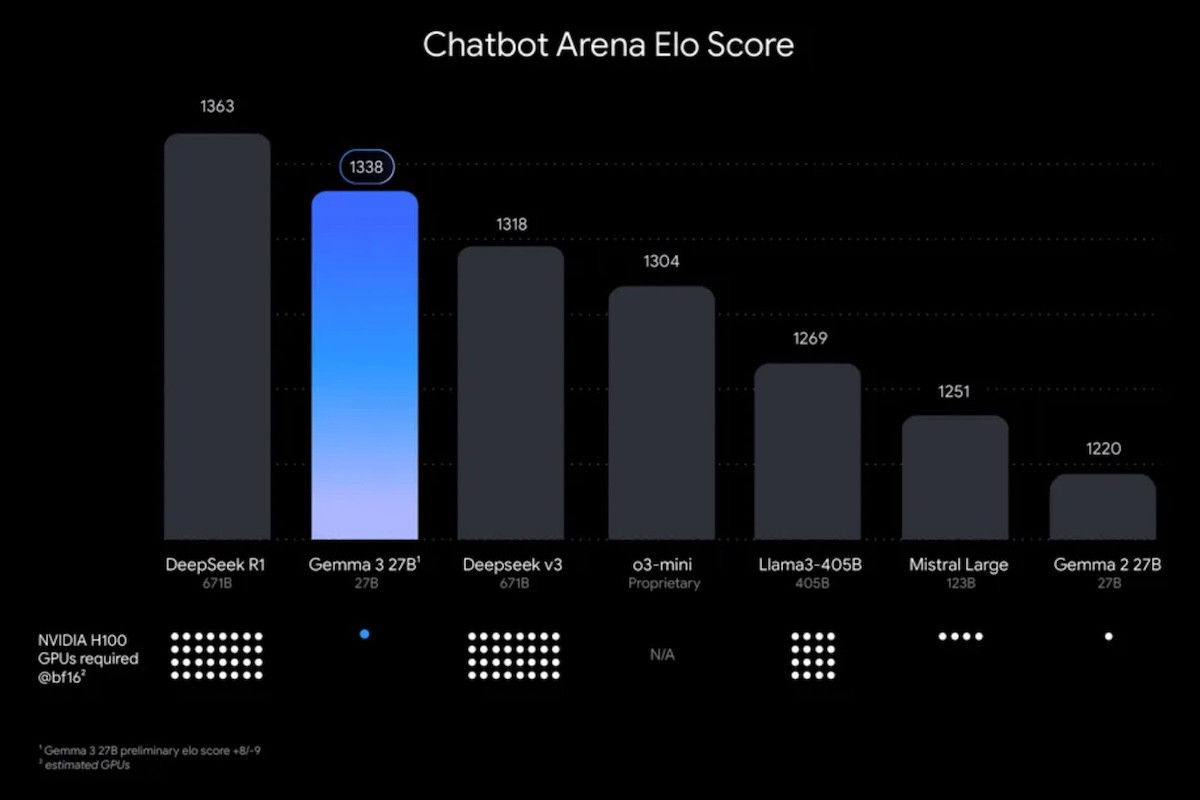

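Like Gemini 2.0, Gemma 3 can process text, images, and short videos. In terms of performance, Google says Gemma 3 outperforms several well-known models, including DeepSeek V3, OpenAI’s o3-mini (which is not an open model), and Meta’s Llama 3 405B, in preliminary human preference evaluations on the LMArena leaderboard.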

Google claims Gemma 3’s 27-billion-parameter model is among the most powerful open models currently available. By Google’s account, it delivers competitive accuracy with a much smaller compute footprint than larger rivals, making it a strong alternative to closed-source AI models in various applications.

Context window equivalent to 200 pages of text

Gemma 3 supports a context window of up to 128,000 tokens, allowing it to process roughly as much text as a 200-page book in a single request. By comparison, Gemini 2.0 Flash Lite supports up to 1 million tokens, offering even greater capacity for long-form documents.
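The 200-page figure can be sanity-checked with simple arithmetic. The sketch below converts a token budget into an approximate page count. Both conversion factors are assumptions: about 0.75 English words per token is a common rule of thumb, and 480 words per page describes a fairly dense page. Actual ratios depend on the tokenizer, the language, and the page layout.

```python
# Rough conversion from a token budget to book pages.
# Both constants are assumptions for illustration, not values from Google.

WORDS_PER_TOKEN = 0.75  # assumed rule of thumb for English text
WORDS_PER_PAGE = 480    # assumed density of a printed page


def tokens_to_pages(
    tokens: int,
    words_per_token: float = WORDS_PER_TOKEN,
    words_per_page: float = WORDS_PER_PAGE,
) -> float:
    """Estimate how many pages of text fit in a given number of tokens."""
    words = tokens * words_per_token
    return words / words_per_page


if __name__ == "__main__":
    for name, budget in [("Gemma 3", 128_000), ("Gemini 2.0 Flash Lite", 1_000_000)]:
        print(f"{name}: {budget:,} tokens ~ {tokens_to_pages(budget):,.0f} pages")
```

With these assumptions, 128,000 tokens correspond to about 200 pages, and a 1-million-token window to roughly 1,560 pages. A sparser page of around 300 words would raise both estimates.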

In addition to its extended context capabilities, Gemma 3 supports function calling and structured output, which let it work with external tools and data and act as an AI agent, much like Gemini’s integration with platforms such as Gmail and Google Docs.

Google’s latest open-source AI models can be deployed locally or via its cloud services, such as Vertex AI. Currently, Gemma 3 is available on Google AI Studio and third-party platforms like Hugging Face, Ollama, and Kaggle.

A strategic move in the AI industry

Gemma 3 is part of a broader industry trend in which AI companies are developing both large language models (LLMs) and small language models (SLMs).

Microsoft, one of Google’s main competitors, is pursuing a similar approach with its open-source Phi family of SLMs. Small language models like Gemma and Phi use computing resources efficiently, making them well suited to smartphone applications.

With lower latency and reduced power consumption, these models offer significant advantages for mobile AI applications, allowing faster real-time responses and on-device AI processing.

Google’s commitment to open-source AI innovation suggests it aims to balance high-performance AI with accessibility, enabling developers worldwide to integrate advanced AI capabilities into their applications more seamlessly.

The Vinh